“How do we get humans to trust in all this AI we’re building?”, asked Affectiva CEO Rana El-Kaliouby, at the prestigious NYT New Work Summit at Half Moon Bay last week. She had already assumed a consensus that trust-building was the correct way to proceed, and went on to suggest that, rather than equipping users and consumers with the skills and tools to scrutinize AI, we should instead gently coax them into placing more unearned faith in data-driven artifacts.

But how would this be accomplished? Well, Affectiva are “on a mission to humanize technology”, drawing upon machine and deep learning to “understand all things human.” All things human, El-Kaliouby reliably informed us, would include our emotions, our cognitive state, our behaviors, our activities. Note: not to sense, or to tentatively detect, but to understand those things in “the way that humans can.”

Grandiose claims, indeed.

The MIT-educated CEO went on to talk about how her technology could create a softer, cuddlier world of technology, filled with machines that have empathy and emotional intelligence capable of interpreting the full range of facial movements and jiving along with them. Reflecting emotions back to humans in a way that makes them seem more trustworthy, likable, and persuasive.

Sounds great, no? Finally, an AI that really “gets me.”

Not quite. In reality, we were being tutored to believe that something as infinitely complex as human emotion can be objectively measured (El-Kaliouby actually said this), codified, and – of course – commodified.

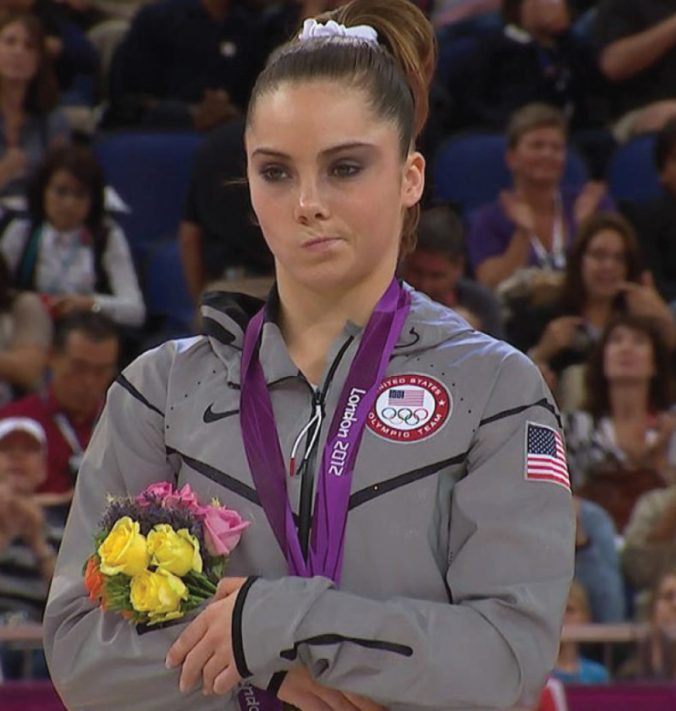

The trouble is, the very example she used served perfectly to undercut Affectiva’s bold assumptions. Asking “what if systems could tell the difference between a smile and a smirk?” – two very distinct emotional expressions using very similar facial muscles – El-Kaliouby displayed the picture below in illustration of the latter:

Is this a smirk?

If you cannot see the problem here then you probably agree with El-Kaliouby that this image portrays a smirking US gymnast. However, a quick Google search will tell you that to smirk is actually to “smile in an affected or smug manner” – in other words something much closer to this image….

This feels closer to a smirk.

So what El-Kaliouby actually proved, if she proved anything at all, is that even among humans – extraordinarily gifted emotion-reading experts – there is confusion and disagreement about which of our facial contortions correlate to which generalized emotion. Not to mention what that emotion actually means. And who knows how far such disagreement would extend when we take into account different cultures, generations, etc.?

It’s not that Affectiva haven’t attempted to counter such confusion. To their credit, they have amassed a database of 7.6 million (consenting) faces from 87 locations around the world, all of whom are “emoting.” Their team is also commendably diverse. Nevertheless, as with every other technology, some serious thinking needs to be done in order to anticipate the ways in which things could go wrong.

What happens when an AI totally misreads a critical situation? We all know that fury doesn’t always manifest in a frown, and happiness does not always yield a smile. Even El-Kaliouby’s own example of a self-driving car using emotion AI to test for attentiveness seems flawed; we’ve all met people who are capable of seeming “bright-eyed and bushy-tailed” even when they have secretly slumped into an attention-consuming trance.

Though El-Kaliouby’s video of Affectiva workers “mugging” for the camera – pulling a variety of cartoonish faces to “teach” the system – is sweet and well-meaning, this whole effort feels like a troubling simplification of something that is necessarily intricate and defies quantification.

Moreover, even if emotion-tracking technology were completely flawless, it still seems profoundly odd that we should be encouraging people into emotional exchanges with technologies that are only very superficially emotional. Even when an AI knows, it does not understand. Should we really be leading people – particularly children and vulnerable adults – to believe that unthinking, distinctly unemotional technologies are actually capable of “getting” them? Is it wise to deliberately blur the line between who is capable of having vs. expressing empathy? In no realistic or foreseeable future could AI actually feel these emotions.

Could this whole exercise be a dangerous deceit? If there is even a shadow of doubt about potential psychological effects, you wouldn’t know it. We’re already talking about this mass experiment as though we all agreed that it’s absolutely an okay thing to do.

Rana El-Kaliouby wasn’t the only presenter rooting for the humanization of explicitly non-human artifacts. She was swiftly followed on stage by senior Alexa bod Dave Limp, who spoke of the voice assistant as though “she” were building out some of the key facets of personhood…like a personality and self-generated opinions. It feels like we’re back in Searle’s Chinese room.

There’s no doubt that some of these technologies have their place (for example, Rana El-Kaliouby briefly mentioned Affectiva’s work with autistic children) but the idea of trying to lull people into trusting AI or into sharing emotional experiences with what is essentially a piece of software seems like a disturbing experiment. Sure, there may well be some worthwhile pay-offs down the line, but at the moment these companies are working on untested hypotheses and we the public are the (paying and largely unknowing) guinea pigs.
