“Thoughts are free and subject to no rule. On them rests the freedom of man, and they tower above the light of nature”

Philippus Aureolus Paracelsus (1493-1541)

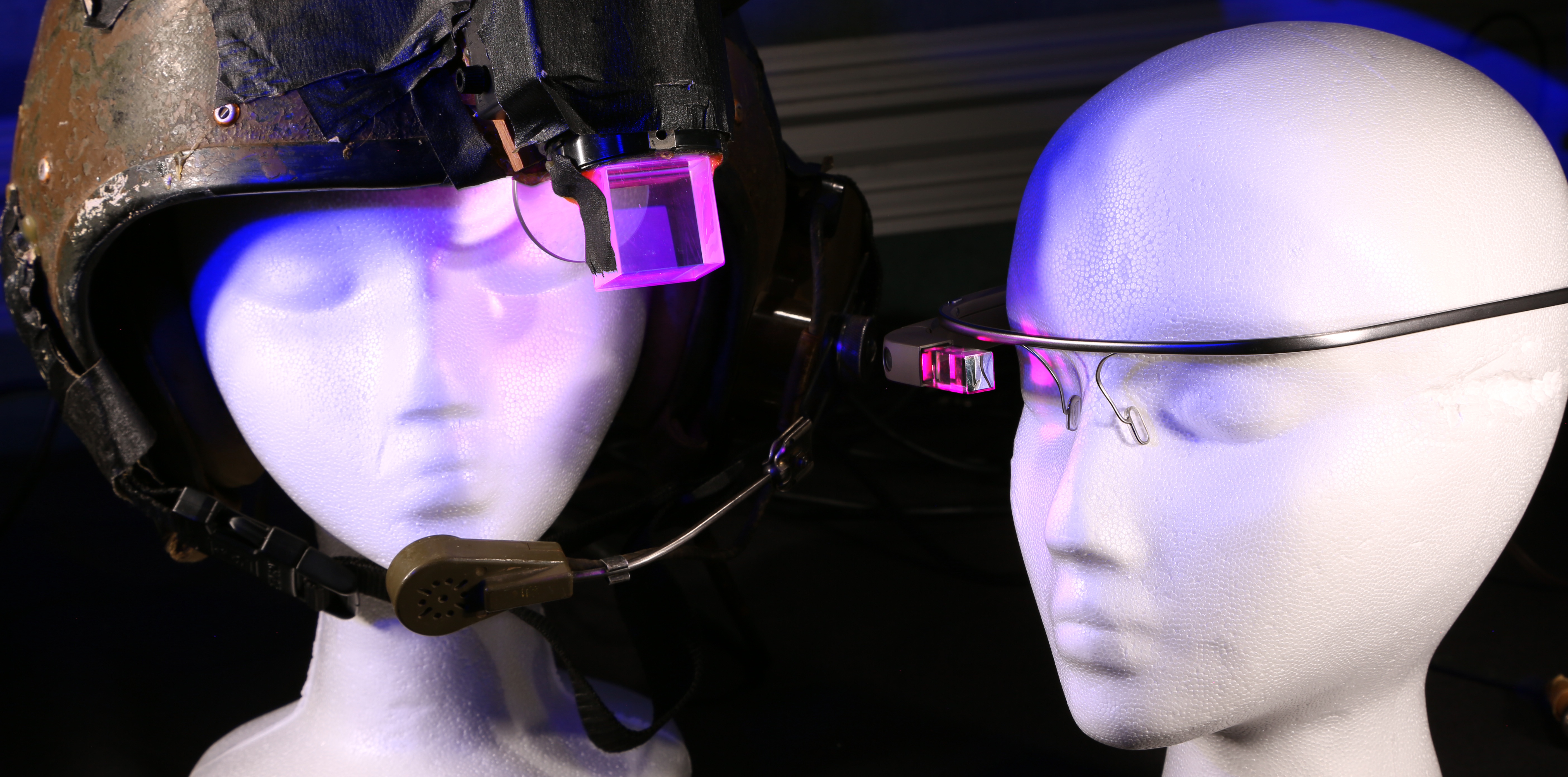

This week, Facebook Reality Labs revealed the latest piece of hardware gadgetry that it hopes will introduce eager consumers to a new world of augmented and mixed reality. The wristband is a type of technology known as a neural — or brain-computer — interface, and can read the electrical nerve signals our brain sends to our muscles and interpret them as instructions.

In other words, you don’t have to move. You can just *think* your movements.

You’d be forgiven for wondering if we’ve evolved too far.

A jazzy, high-production video features grinning young San Francisco-type execs describing this new, immersive experience. They’ve invented it, and they’ll be damned if they aren’t going to foist it upon us. “The wrist is a great starting point for us technologically,” one chirps, “because it opens up new and dynamic forms of control.” Quite.
