“Thoughts are free and subject to no rule. On them rests the freedom of man, and they tower above the light of nature”

Philippus Aureolus Paracelsus (1493-1541)

This week, Facebook Reality Labs revealed the latest piece of hardware gadgetry that it hopes will introduce eager consumers to a new world of augmented and mixed reality. The wristband is a type of technology known as a neural — or brain-computer — interface, and can read the electrical nerve signals our brain sends to our muscles and interpret them as instructions.

In other words, you don’t have to move. You can just *think* your movements.

You’d be forgiven for wondering if we’ve evolved too far.

A jazzy, high-production video features grinning young San Francisco-type execs describing this new, immersive experience. They’ve invented it, and they’ll be damned if they aren’t going to foist it upon us. “The wrist is a great starting point for us technologically,” one chirps, “because it opens up new and dynamic forms of control.” Quite.

Here’s the sales pitch:

At the same time — this very week — members of the neuroscience community convened (virtually) in Chile to talk through concerns about the increasing prevalence of these kinds of neurotechnologies, with many calling for the establishment of fresh “neurorights” to protect users.

The conference, entitled “Neurorights in Chile: The Philosophical Debate”, might sound as esoteric as it gets, but its content was broad-ranging and highly relevant to today’s AI-forward tech landscape. Familiar topics like bias and discrimination were discussed through a neurotech lens, as were foreseeable problems for user autonomy and agency, (mental) privacy, and human identity.

It’s easy to scoff. But as one of the conference conveners, neurobiologist Professor Rafael Yuste, pointed out: some of the world’s wealthiest investors are betting on the interplay between artificial intelligence and the mind.

That the event was anchored in Chile is a result of the country’s impressive proactiveness when it comes to getting ahead of future problems with neural interfaces. At the end of last year the Chilean chamber approved a Bill to protect “the integrity and mental identity of the people in regards to the advancements of neurotechnology”. This pioneering amendment had Yuste’s hand in it, and “establishes that neural data have the same status as organs and penalizes their trafficking or manipulation, unless there is a medical indication.”

If you still think that those worried about neural interfaces should go back to polishing their tinfoil hats, then consider these recent OECD recommendations and this robust initiative from the Neurotechnology Center at world-renowned Columbia University. As far back as 2017, top-flight experts were identifying and publicizing key ethical considerations.

The message is clear: these technologies, which include Kernel and Elon Musk’s Neuralink, among others, present new ways for companies and governments to exploit and manipulate people, as well as new opportunities for hackers and other bad actors (Nature Index).

But how?

Well, the brain is the organ that produces the mind, so many will find it difficult to accept that any level of tracking and surveillance (outside of a medical context) can be conducted without violating a precious kind of mental privacy. This isn’t just about the collection of personal data, but the kinds of inferences that can be made from that data, given its nature.

Addressing the conference, UC Berkeley psychologist Professor Jack L. Gallant emphasized that “in the long run the largest volume of brain data will eventually come from non-invasive neurotechnologies, which are wearables that normal people just carry around with them on the street.”

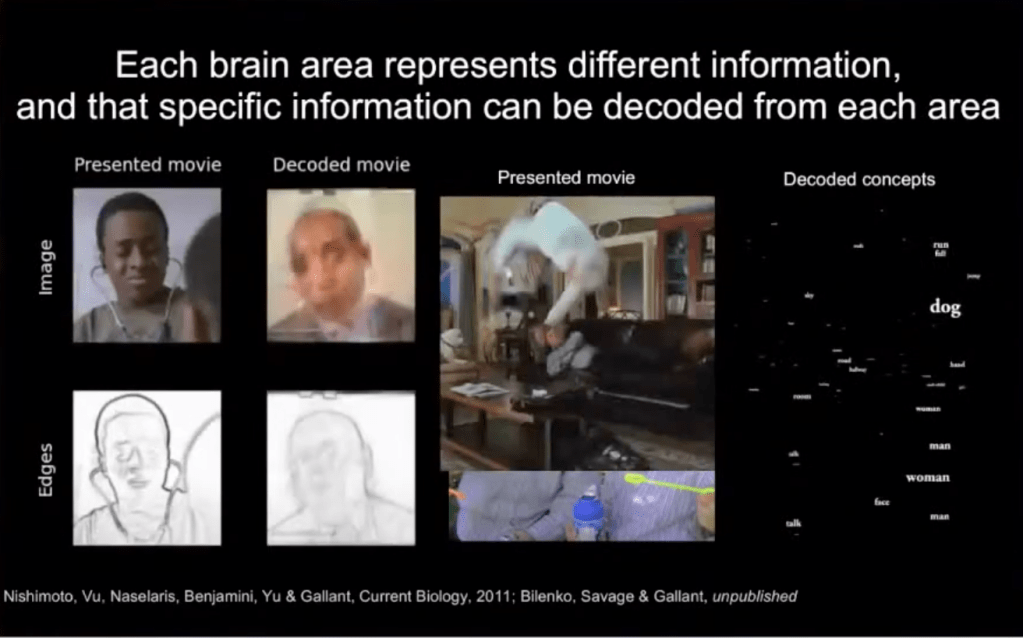

Focusing specifically on non-invasive tech (i.e. no implanted chip), Gallant went on to present a series of startling findings that demonstrate how brain signals can be decoded through inversion (see below), before reminding his audience that this is an area of great interest for Big Tech (as well as, no doubt, lawyers looking to subpoena witnesses, tyrannical governments, etcetera etcetera).

Speaker Dr. Marcello Ienca tempered concerns about this kind of decoding (or “mind reading”) happening imminently, given current capabilities, but asserted that mental privacy in the broad sense is a pressing concern for which “we do not have adequate regulatory and ethical infrastructures.”

This broad sense relates to the avalanche of brain data that will be harvested and aggregated (e.g. with GPS data, social media data, browsing history, etc.) to make associations between, for example, online behavior and brain activity. Ienca ultimately believes that mental privacy is a human right, arguing that it is a prerequisite for the basic development and preservation of personhood, self-identity, and the right to self-determination.

We already worry about influence and autonomy in the context of data collection and subsequent AI deployment. The monitoring, measurement, analysis and commodification of our neural responses seem a step too far for many, including the neuroscientists who understand what can be deduced from these intimate flickerings and firings. Though we’re often asked to point to harmful consequences to validate concerns about ever-more-intrusive technology, as Ienca illustrates, there is something intrinsically violating about this encroachment on the mind.

It is believed that by examining our neural activity, researchers can establish impulses, thoughts, and intentions not even known to ourselves (see: readiness potential). This, and a tendency for mission creep, means that new consumer neurotech could lead us to give away more of ourselves than we could even know. Literally.

It’s gratifying to see that these conversations are in full flow, and that some governments and intergovernmental bodies are establishing where the red lines should be. Nevertheless, it’s obvious that much greater and more intensive scrutiny is needed worldwide to ensure that we preserve critical and life-enhancing aspects of medical neurotech while establishing bold restrictions on the brazen and autonomy-stifling use of its commercial counterpart.