A “Virtual” or “Digital” Human. Credit: Digital Domain

The #AIShowBiz Summit 3.0 – which took place last month – sits apart from the often dizzying array of conferences vying for the attention of Bay Area tech natives. Omnipresent AI themes like “applications for deep learning”, “algorithmic fairness”, and “the future of work” are set aside in favor of rather more dazzling conversations on topics like “digital humans”, “AI and creativity”, and “our augmented intelligence digital future.”

It’s not that there’s anything wrong with the big recurring AI themes. On the contrary, they are front-and-center for very good reason. It’s that there’s something just a little beguiling about this raft of rather more spacey, futuristic conversations delivered by presenters who are unflinchingly “big picture”, while still preserving necessary practical and technical detail.

Yet, new AI themes often deliver new AI concerns. In this case, these issues fell into two distinct camps: the completely unintended consequences of AI creativity, and the potentially problematic consequences of very deliberate action.

Let me explain.

In a morning session, Paul Costello, Senior Director at Qualcomm, made a prediction that aligns with many other forecasts we’re hearing at the moment: augmented reality is about to be the next big platform. In the near future, he promised, more AR-enabled smart glasses will ship than smartphones, and with this shift, “brand new real estate will open up for advertisers and merchandisers.” And the preparations are already underway…

Indeed, the questionable practice of tracking and monitoring internet users is about to take steroids as AR and VR solutions allow retailers and other interested parties to pack out their already overflowing databanks with granular biometric information from surveillance like eye-tracking. This add-on technology promises not only to identify/authenticate AR users, but also to supply companies looking to exploit superior knowledge about user preferences with more live information, like “real-time gaze tracking”. This allows them (I assume) to dynamically adapt the content served to persuadable users in order to optimize sales.

In theory, we consumers get a more bespoke product. In reality, we’re being understood, predicted, and potentially even determined by “advertisers and merchandisers” who care nothing for our personal objectives, and everything about doing whatever it takes to sell products and services to boost their bottom line.

The second kind of poor consequence seemed to arise when a perfectly innocent-seeming technology is potentially weaponized (as opposed to ethically dubious technology being used for its intended purpose). For example, the astoundingly sophisticated cinematic technology that allows makers to convincingly recreate and animate a real human face remotely opens up new and terrifying opportunities for mass deception.

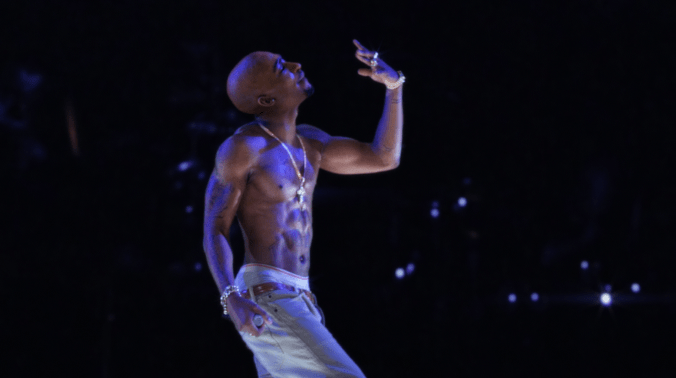

The incredibly talented (and refreshingly candid) John Canning of Digital Domain, the visual effects and digital production company responsible for this tool, admitted to an anxious audience member that problems could well arise from the abuse of the technology responsible for so-called “digital humans” – particularly when these AI imposters are based on real people.

And there is no rowing back now that we know this kind of remote animation of “fake faces” is possible. It is simply left to us as concerned makers or citizens to anticipate and block any malicious use – like the impersonation of world leaders before their populations, parents before their unsuspecting children, and authority figures, like law enforcement, before anyone who might reveal important personal information to a recognizable figure.

Hologram 2Pac. Credit: Digital Domain.

With technology like this on the table, there are many, many questions to ask. For instance, is it okay to reanimate the dead (hint: they already have)?

Ultimately, all kinds of AI-driven technology will have to be scrutinized for misuse, and currently the louder conversation excludes instances like this. Perhaps because they still feel rather too Hollywood. But we ignore oppressive or deceptive uses of tech at our own risk. Let’s not wait for the horses to bolt before addressing the ways any/all intelligent products could function to the detriment of individuals and society.